4.) Girsanov Theorem (Change of measure) & its application

Brief Intro to Multivariate Normal Distribution

(i) Motivation

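Has anyone noticed that so far we've only ever modelled the dynamics of a single asset?

That's because every deriv we've priced has had just one underlying

So we haven't yet looked at how to handle more than one underlying

And once those underlyings are all correlated with each other, it's even less obvious what to do

Think about it: real-world derivs are so damn complicated that modelling the dynamics of a single underlying can't possibly be enough

A deriv could easily be based on 3-4 underlyings, and that's before we even get to the crazy payoffs

So if we want to keep going, we have to know how to model several correlated underlyings at the same time (e.g. Apple and Google, S&P500 and SX5E, EUR and CHF)

Then we'll be able to price the more complicated derivs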

(ii) Background


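Not sure if everyone still remembers

In the models we've considered so far, all of the randomness actually comes from a Wiener process

(The randomness in both Black-Scholes and Vasicek comes from a Wiener process)

Suppose we want to model the dynamics of 2 correlated underlyings

Then naturally we need two correlated Wiener processes

And then we plug each one in with its own r and σ to build two SDEs under the Q measure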

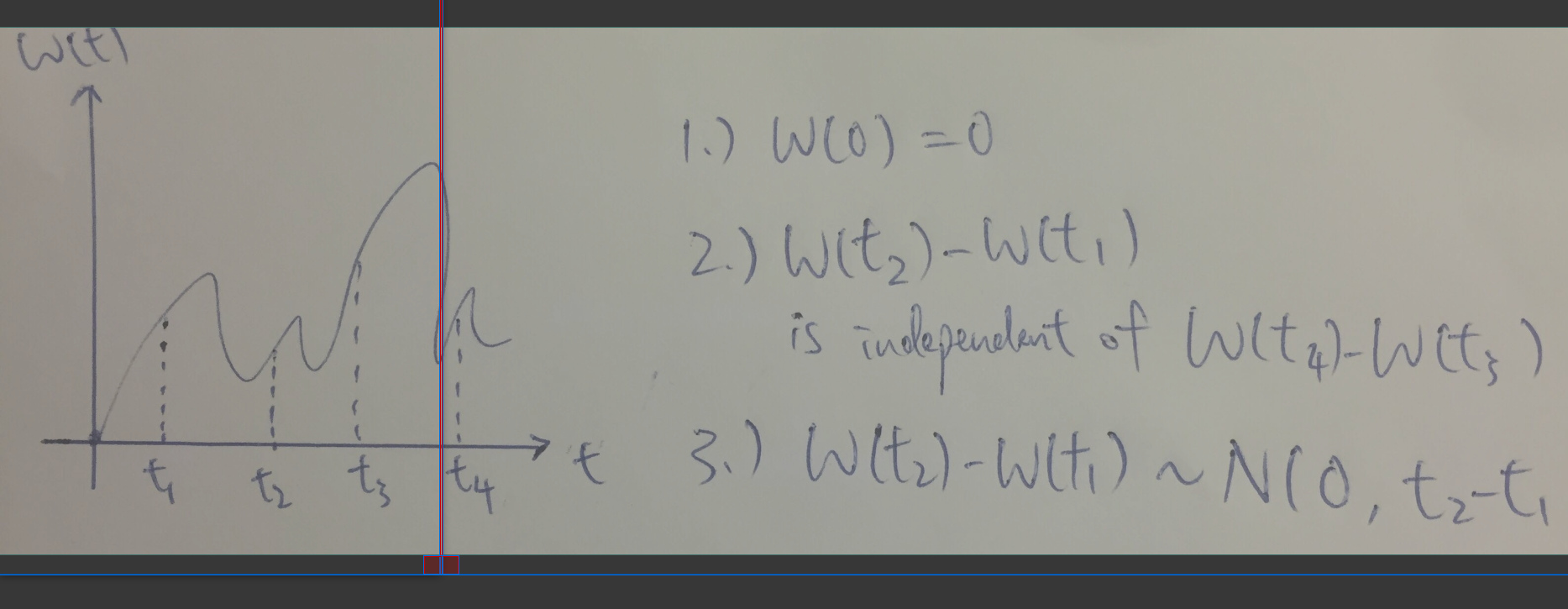

And does everyone still remember the definition of a Wiener process?

Loosely speaking, anything that satisfies all three points below can be called a Wiener process

1.) Starts at 0, i.e. W(t_0) = 0

2.) Stationary increments, i.e. W(t+h) - W(t) ~ N(0,h)

3.) Independent increments, i.e. W(t_4) - W(t_3) is independent of W(t_2) - W(t_1) [where t_1 < t_2 < t_3 < t_4]

If we set t = 0 in 2.) and combine it with 1.)

we get W(h) ~ N(0,h)
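For anyone who wants to see W(h) ~ N(0,h) with their own eyes, here is a minimal sketch (not a rigorous check). It assumes NumPy is available; the function name `simulate_wiener_paths`, the grid size and the seed are all my own choices for illustration. It builds paths from independent N(0, dt) increments starting at 0, then looks at the sample mean and variance of W(h).

```python
import numpy as np

def simulate_wiener_paths(n_paths, n_steps, horizon, seed=0):
    """Simulate Wiener paths on an even time grid over [0, horizon].

    Returns an array of shape (n_paths, n_steps + 1);
    column k holds W(k * dt) for each path.
    """
    rng = np.random.default_rng(seed)
    dt = horizon / n_steps
    # Independent N(0, dt) increments (points 2 and 3 of the definition).
    increments = rng.normal(0.0, np.sqrt(dt), size=(n_paths, n_steps))
    paths = np.zeros((n_paths, n_steps + 1))  # point 1: W(0) = 0
    paths[:, 1:] = np.cumsum(increments, axis=1)
    return paths

h = 2.0
paths = simulate_wiener_paths(n_paths=50_000, n_steps=20, horizon=h)
w_h = paths[:, -1]  # terminal values, i.e. samples of W(h)

print("sample mean of W(h):    ", w_h.mean())  # should be close to 0
print("sample variance of W(h):", w_h.var())   # should be close to h
```

The sample variance should land near h no matter how many steps the grid has, because the variances of independent increments simply add up.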

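So

very roughly speaking, building two correlated Wiener processes is a lot like building two correlated Normal R.V.s

That's exactly why we first need to work out what correlated Normal R.V.s (i.e. Multivariate Normal) actually are

Answer the simpler question first, then build up from it by analogy

(Disclaimer: I won't be giving the really rigorous maths and proofs here, and I couldn't even if I wanted to

If you're interested, I strongly recommend reading a book yourself)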

(iii) Multivariate Normal Distribution

If I say X follows a Normal Distribution, i.e. X ~ N( μ , σ^2), I think everyone can follow that easily

Multivariate Normal, as the name suggests, means there's more than one R.V. (e.g. X_1 , X_2) and they jointly follow a (multivariate) normal distribution

If there are exactly two R.V.s, i.e. X_1 and X_2, then we say X_1 and X_2 follow a Bivariate Normal Distribution (with a certain correlation or covariance)

Of course X_1 and X_2 each follow a Normal Distribution on their own, but in general their means and variances will be different

So how do we write "X_1 and X_2 follow a Bivariate Normal Distribution" in maths scribbles?

It's actually not hard

Linear algebra is our friend

Just write X_1 and X_2 as a (random) vector and that gets the idea across

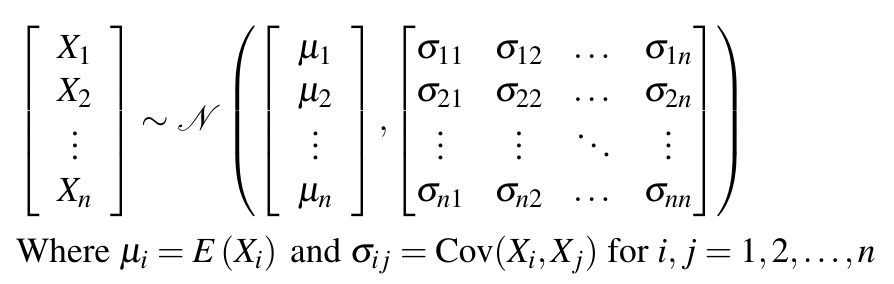

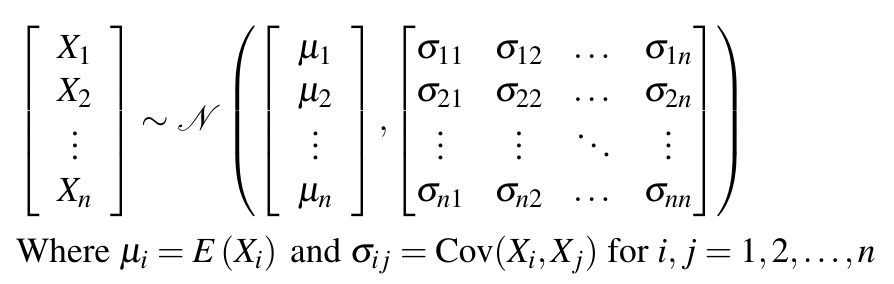

And in general the random vector looks like the picture below

Each X_i has its own mean and variance, and they're all correlated with each other (or rather, they have covariances)

And X_1, X_2 , ... , X_n taken together follow a Multivariate Normal Distribution

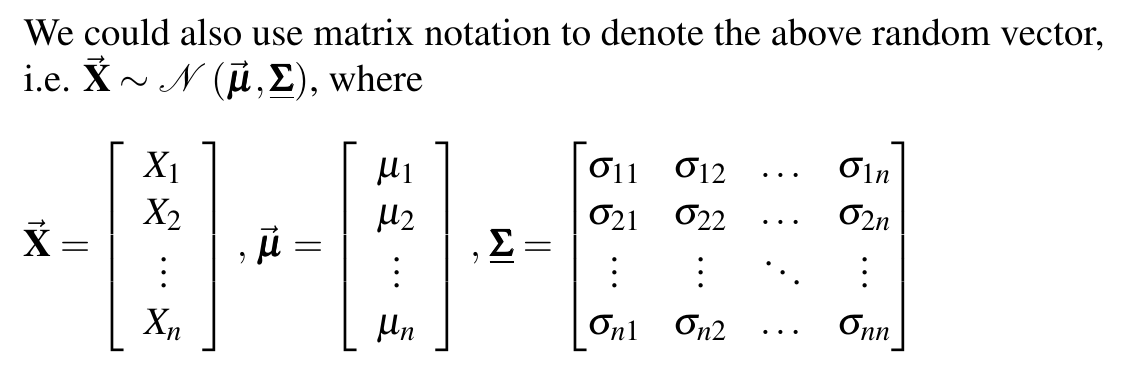

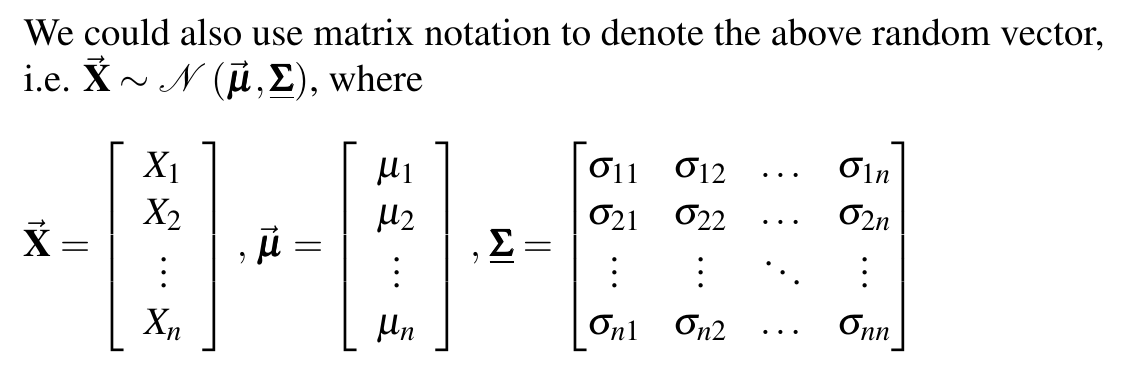

For convenience, we can also write the same thing in matrix/vector notation

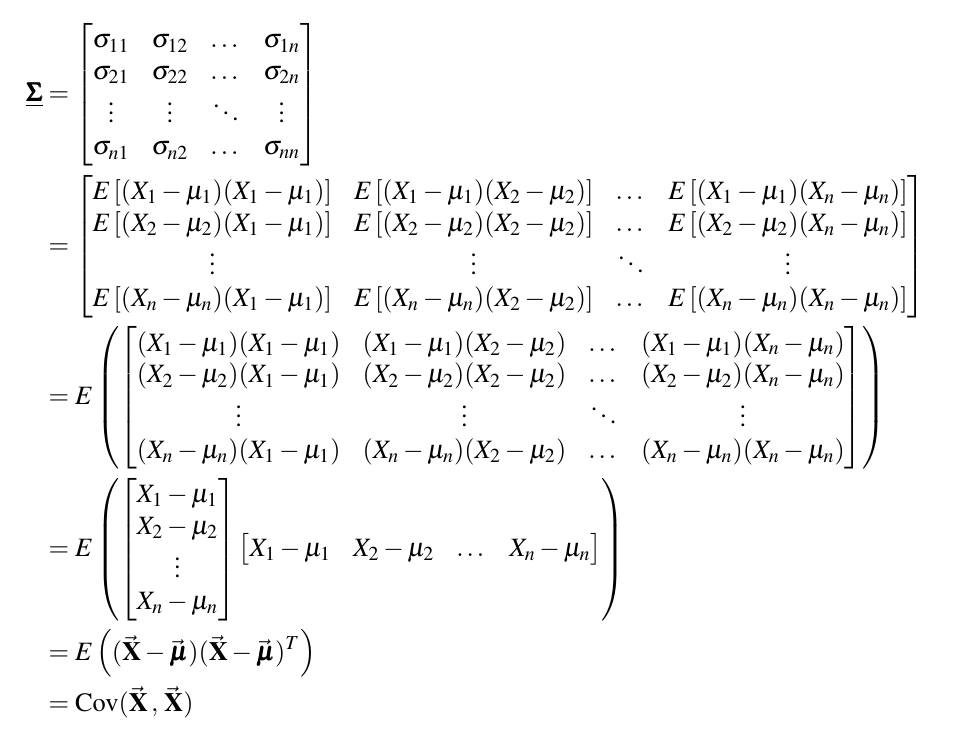

This matrix Σ actually has a name: the Variance-Covariance Matrix

Why it's called that should be easy to see

Because Σ contains all the vars and covs of X_1 to X_n

And this Var-Cov matrix is always symmetric [since Cov( X_i , X_j) = Cov( X_j , X_i)]

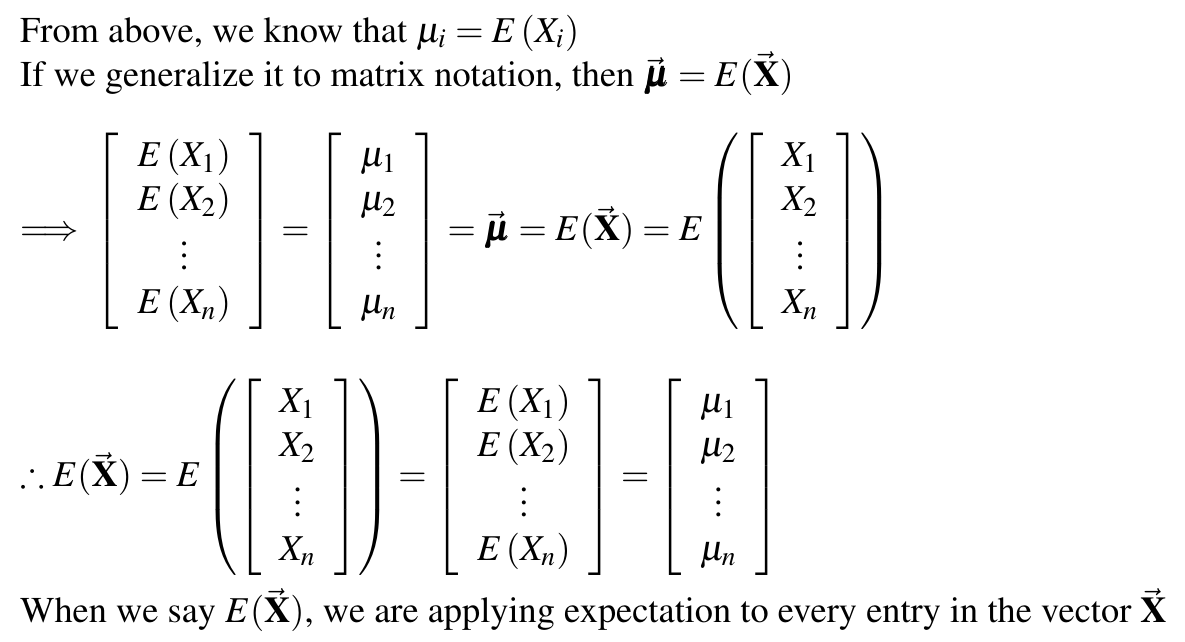

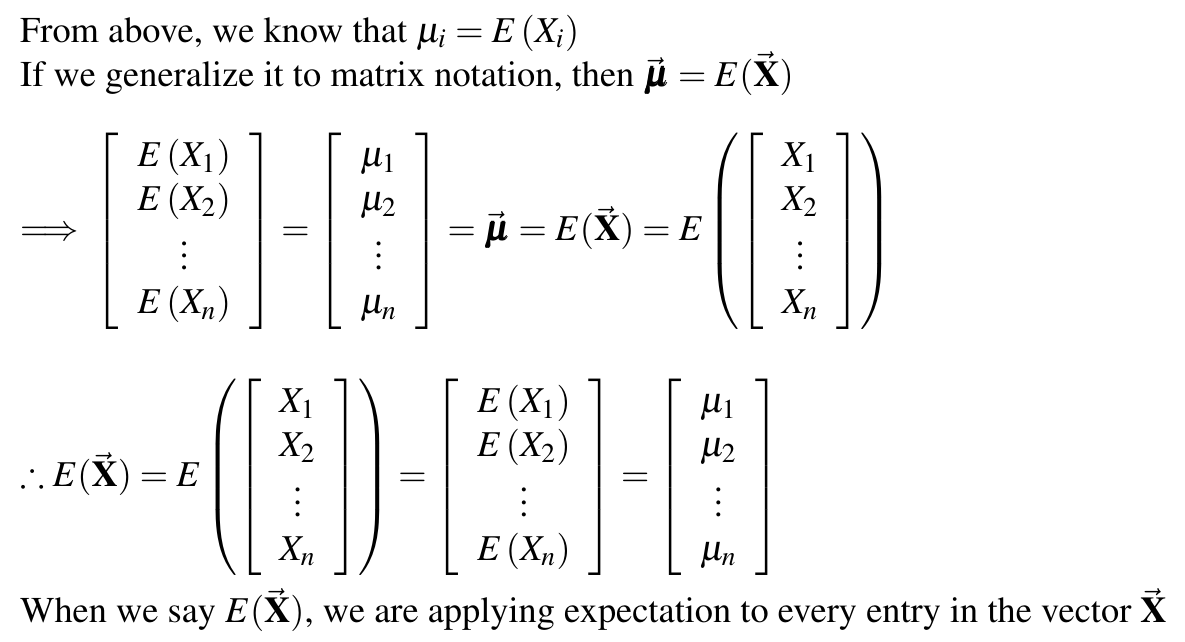
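To make the notation a bit more concrete, here is a small sketch of a bivariate normal in code. It assumes NumPy, and the numbers in `mu` and `Sigma` are made up purely for illustration. I'm treating NumPy's built-in sampler as a black box here; the point is only to see that the mean vector and the Var-Cov matrix Σ are the whole specification, and that Σ is symmetric.

```python
import numpy as np

# Hypothetical parameters for (X_1, X_2); the numbers are illustration only.
mu = np.array([1.0, -2.0])        # E(X_1), E(X_2)
Sigma = np.array([[4.0, 0.6],     # Var(X_1),      Cov(X_1, X_2)
                  [0.6, 0.25]])   # Cov(X_2, X_1), Var(X_2)

# Symmetry: Cov(X_i, X_j) = Cov(X_j, X_i).
print("Sigma symmetric:", np.allclose(Sigma, Sigma.T))

rng = np.random.default_rng(42)
# Each row of `samples` is one draw of the random vector (X_1, X_2).
samples = rng.multivariate_normal(mu, Sigma, size=200_000)

sample_mean = samples.mean(axis=0)
sample_cov = np.cov(samples, rowvar=False)  # columns are the variables

print("sample mean:\n", sample_mean)  # should be close to mu
print("sample cov:\n", sample_cov)    # should be close to Sigma
```

With this many draws the sample mean and sample covariance should sit close to `mu` and `Sigma`, and each column on its own is just a one-dimensional normal sample.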

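The next thing we'd like to know is how to take expectations under this matrix notation

When X is a matrix/vector, what kind of operation is E( X ) exactly?

What I've written above still leaves a bit of ambiguity, so let me define it clearly with the picture below first

(p.s. Variance and Covariance are really just expectations too, so defining clearly how to take an expectation is enough)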

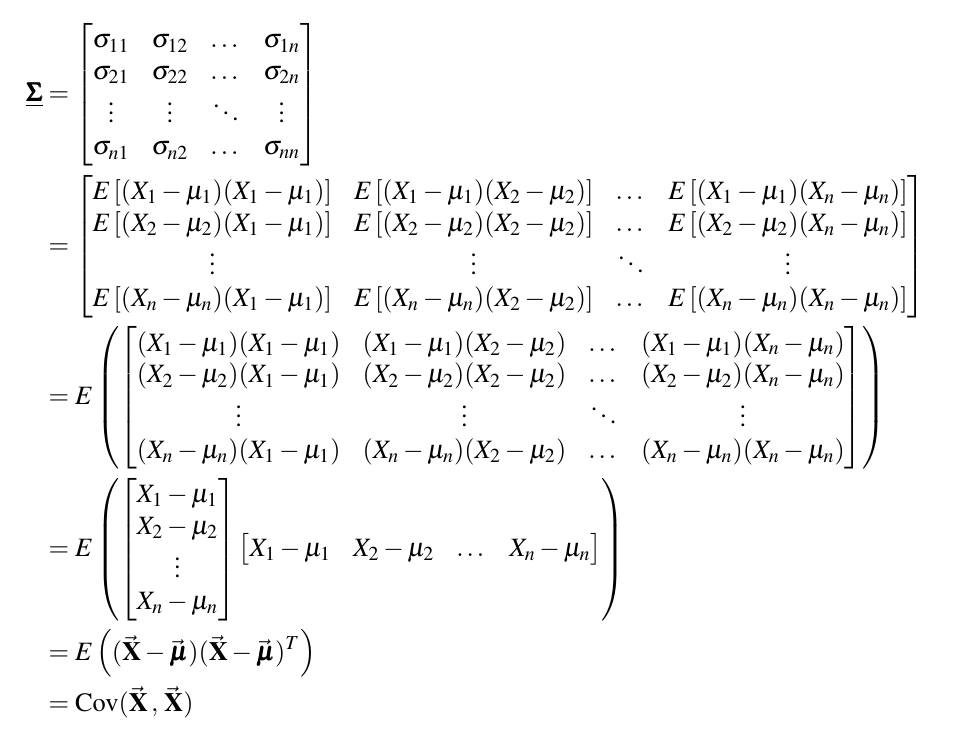

And the variance-covariance matrix can also be written as the expectation of a matrix

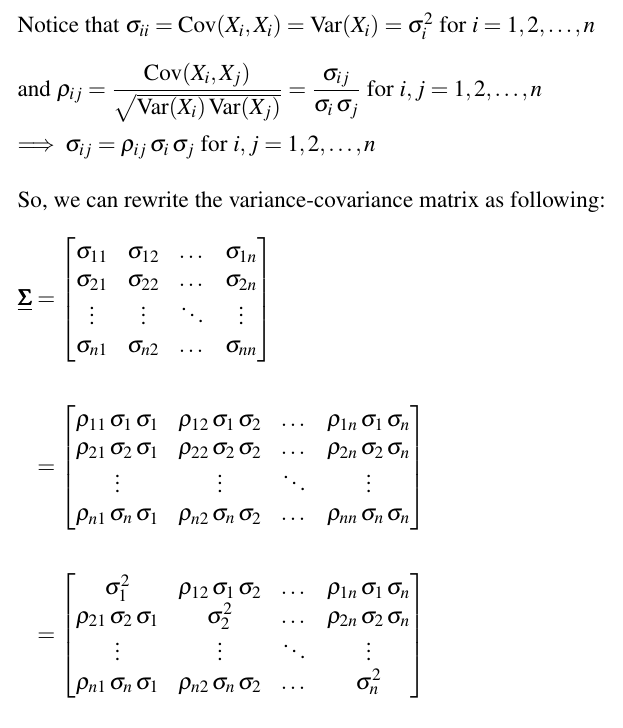

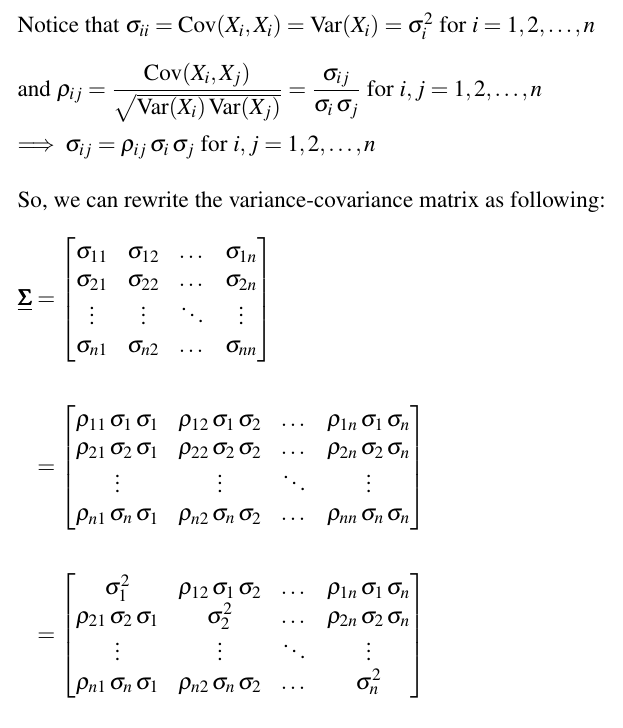
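Here is a rough numerical sanity check of the "expectation of a matrix" idea, i.e. Σ = E[ (X - μ)(X - μ)^T ] with the expectation taken entry by entry. It assumes NumPy; the 3-dimensional `mu` and `Sigma` below are hypothetical values I picked for the demo. The expectation is replaced by an average over many draws, so the match is only approximate.

```python
import numpy as np

# Hypothetical 3-dimensional example; the numbers are illustration only.
mu = np.array([0.0, 1.0, -1.0])
Sigma = np.array([[1.0, 0.3, 0.2],
                  [0.3, 2.0, -0.5],
                  [0.2, -0.5, 1.5]])

rng = np.random.default_rng(7)
# Each row of X is one draw of the random vector (X_1, X_2, X_3).
X = rng.multivariate_normal(mu, Sigma, size=200_000)

centered = X - mu  # each row is (X - mu)^T for one draw

# Average of the outer products (X - mu)(X - mu)^T over all draws,
# which is the sample version of the entry-by-entry expectation.
outer_mean = centered.T @ centered / len(X)

print("estimate of E[(X - mu)(X - mu)^T]:\n", outer_mean)
print("max abs difference from Sigma:", np.abs(outer_mean - Sigma).max())
```

The diagonal of the result holds the variances and the off-diagonal entries hold the covariances, which is exactly why one matrix expectation is enough to define all of them at once.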

Suppose now we don't know the covariances, but the pairwise correlations of X_1 to X_n are specified

(and of course assume the Var of each X_i is given too)

Then the Var-Cov matrix Σ can be rewritten accordingly
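Since Cov( X_i , X_j ) = ρ_ij σ_i σ_j (with ρ_ii = 1), the rewritten Σ is just the correlation matrix sandwiched between two diagonal matrices of standard deviations. A small sketch of that, assuming NumPy; the helper name `cov_from_corr` and the example numbers are hypothetical and only there for illustration.

```python
import numpy as np

def cov_from_corr(sigmas, corr):
    """Build Sigma with entries rho_ij * sigma_i * sigma_j.

    sigmas: standard deviations sigma_1..sigma_n
    corr:   n x n correlation matrix with ones on the diagonal
    """
    sigmas = np.asarray(sigmas, dtype=float)
    corr = np.asarray(corr, dtype=float)
    D = np.diag(sigmas)   # diagonal matrix of standard deviations
    return D @ corr @ D   # entry (i, j) is sigma_i * rho_ij * sigma_j

# Hypothetical inputs: three standard deviations and their correlations.
sigmas = [0.2, 0.3, 0.1]
corr = [[1.0, 0.5, -0.2],
        [0.5, 1.0, 0.4],
        [-0.2, 0.4, 1.0]]

Sigma = cov_from_corr(sigmas, corr)
print(Sigma)
```

The diagonal comes out as σ_i^2 because ρ_ii = 1, and the result stays symmetric as long as the correlation matrix is.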

If you also want to see the pdf, mgf, characteristic function and so on, go to wiki for the details

https://en.wikipedia.org/wiki/Multivariate_normal_distribution#Density_function


Space is limited, so I can only sketch the framework

-------------------------------------------

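Hit the character limit again

To be continued in the next comment

4000 characters per comment really isn't enough

I keep hitting the limit

Above, we introduced the basic setting of the multivariate normal

The next comment will cover how we can actually generate R.V.s that jointly follow a multivariate normal

Then we go one level up and extend that to generating correlated Wiener processes

That gives us enough background to handle the last two examples for the Girsanov Theorem

One of them is the exchange option, and I'll keep the last example a surprise for now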

